Your pilot crushed it in testing. Applause all around.

Three months later? Adoption is poor. Workarounds everywhere. Customers annoyed. Leadership asking where the $2M went.

The good news? You’re not alone.

The MIT NANDA group’s recent State of AI in Business 2025 report argues that while Gen AI adoption is high – largely thanks to AI chatbots – business transformation and ROI are remarkably low.

The authors point to a cause: most tools don’t remember, don’t learn, and don’t fit the actual workflow.

The result: 95% of pilots are failing – at least among the 300 business leaders the NANDA group interviewed.

What the winners are doing differently tracks with our own hard-won experience:

- Map the whole process (not just the fun parts)

- Determine the specific parts that benefit from AI

- Design clean handoffs where AI ends and human judgment begins

- Measure the business outcome, not the model metric

This mirrors what the MIT group sees in organizations on the “right side” of the Generative AI divide: they’re adopting adaptive, embedded systems that learn from feedback and integrate deeply into workflows.

What we’ve seen in the field

- Retail support rollout: Answers were accurate, but refunds still required three disconnected systems. Satisfaction dropped ~40% and AI took the blame.

- Logistics control tower: 92% accurate delay predictions… and no playbook to act. Alerts became noise.

- Insurance claims revamp: Our team first mapped the claims path, coded the recurring manual work, then layered AI into the existing system to automate the tedious work no one wanted to do. Result: 3× ROI in year one – not from “smarter AI,” but from better workflow mapping.

These patterns line up with MIT’s data: only ~5% of custom enterprise AI tools make it to production; most demos look great but break at brittle handoffs and misaligned workflows.

What’s the antidote?

What we find works is performing extensive process mapping before embarking on any AI transformation:

- Map every step end-to-end, e.g., Gather data → Format in Excel → Draft → Send → Wait → Revise → Publish

- Mark repetitive, rule-based work with ✦ (i.e. the parts AI will accelerate)

- Mark judgment calls with ★ (i.e. where humans add value)

- Circle the handoffs between ✦ and ★ (this is where things break)

- If your map shows slow, messy handoffs, AI won’t fix them. Redesign the flow.
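The mapping exercise above can also be sketched in a few lines of code. This is a minimal illustration, not a tool we ship: the `Step`, `Kind`, and `find_handoffs` names are made up for this example, and the ✦/★ tag on each step of the sample flow is an assumption chosen only to show where handoffs appear.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    AI = "AI"        # ✦ repetitive, rule-based work
    HUMAN = "HUMAN"  # ★ judgment calls


@dataclass(frozen=True)
class Step:
    name: str
    kind: Kind


def find_handoffs(steps):
    """Return (from_step, to_step) pairs where work passes between AI and human."""
    return [(a, b) for a, b in zip(steps, steps[1:]) if a.kind != b.kind]


# Hypothetical tagging of the example flow; your own map will differ.
workflow = [
    Step("Gather data", Kind.AI),
    Step("Format in Excel", Kind.AI),
    Step("Draft", Kind.AI),
    Step("Send", Kind.HUMAN),
    Step("Wait", Kind.HUMAN),
    Step("Revise", Kind.AI),
    Step("Publish", Kind.HUMAN),
]

handoffs = find_handoffs(workflow)
for a, b in handoffs:
    # Each printed line is a handoff to "circle" on the map.
    print(f"[{a.kind.value}] {a.name} -> [{b.kind.value}] {b.name}")
```

Every pair the function returns is a handoff worth circling. If the list is long, or the same work bounces back and forth, that is a signal to redesign the flow before adding any AI.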

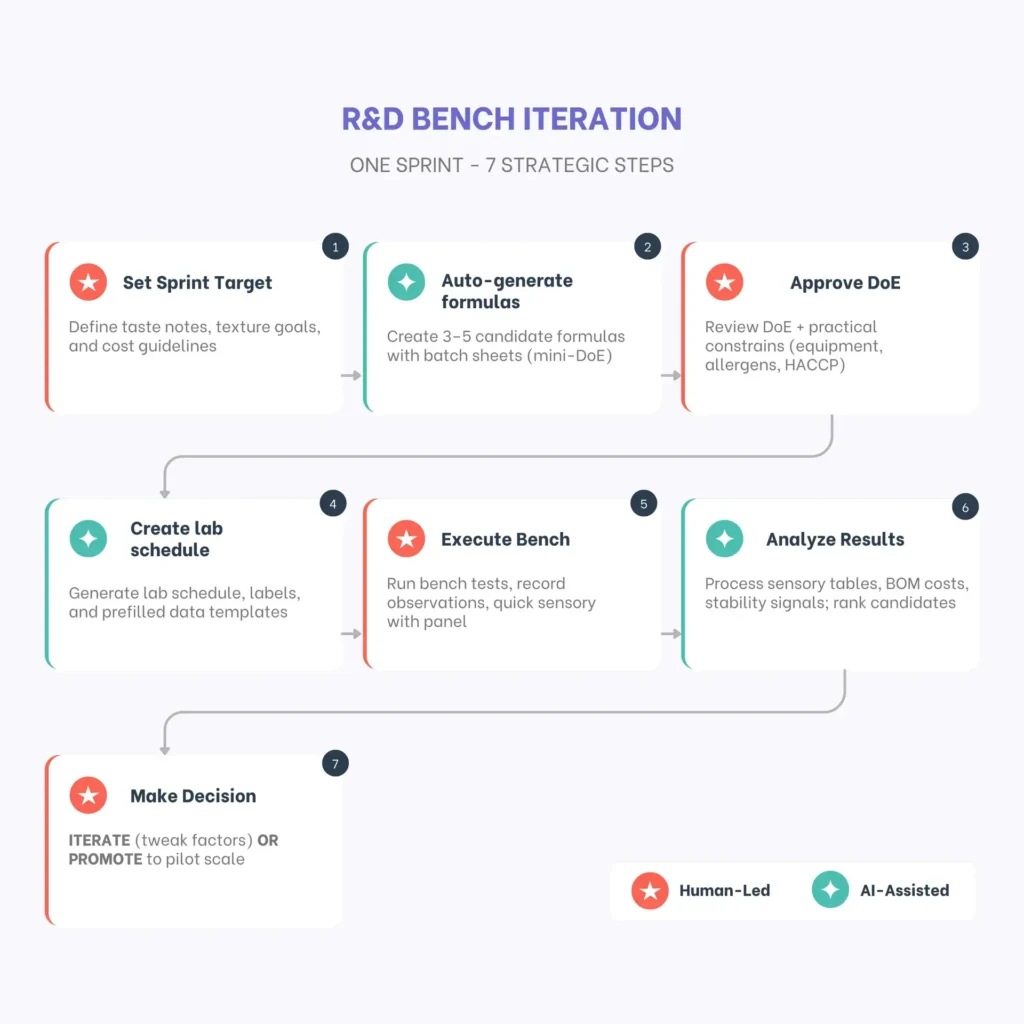

Here’s an example:

AI (✦) accelerates the boring parts while humans (★) handle taste, safety, and go/no-go decisions.

Why have so many human handoffs?

As per MIT, AI already “wins” simple tasks (e.g., drafting emails), but for multi-week, high-stakes work humans are preferred ~9:1.

The punchline?

Don’t buy “smarter AI.”

Design better workflows that work with AI, so your AI (✦) / human (★) handoffs stay clean and value keeps compounding.

Two other data points that were eye-opening for me (from MIT NANDA):

- Shadow AI is real: Only ~40% of companies have official LLM subscriptions, but employees in ~90% of companies use personal AI for work – proof that flexible tools in good workflows get adopted.

- Budgets chase visibility, not ROI: ~70% of spend goes to Sales/Marketing because it’s easy to measure, while back-office work (where much of the ROI sits) stays underfunded.

Let me know if you’d like me to share the MIT NANDA report with you.